BIN-Storing data

Storing Data

Keywords: Representation of data; common data formats

Contents

This unit is under development. There is some contents here but it is incomplete and/or may change significantly: links may lead to nowhere, the contents is likely going to be rearranged, and objectives, deliverables etc. may be incomplete or missing. Do not work with this material until it is updated to "live" status.

Abstract

...

This unit ...

Prerequisites

You need to complete the following units before beginning this one:

Objectives

...

Outcomes

...

Deliverables

- Time management: Before you begin, estimate how long it will take you to complete this unit. Then, record in your course journal: the number of hours you estimated, the number of hours you worked on the unit, and the amount of time that passed between start and completion of this unit.

- Journal: Document your progress in your Course Journal. Some tasks may ask you to include specific items in your journal. Don't overlook these.

- Insights: If you find something particularly noteworthy about this unit, make a note in your insights! page.

Evaluation

Evaluation: TBD

- This unit can be submitted for evaluation for a maximum of 5 marks. Details TBD.

Contents

Task:

- Read the introductory notes on concepts about storing data for bioinformatics.

Any software project requires modelling on many levels - data-flow models, logic models, user interaction models and more. But all of these ultimately rely on a data model that defines how the world is going to be represented in the computer for the project's purpose. The process of abstraction of data entities and defining their relationships can (and should) take up a major part of the project definition, often taking several iterations until you get it right. Whether your data can be completely described, consistently stored and efficiently retrieved is determined to a large part by your data model.

Databases can take many forms, from memories in your brain, to shoe-cartons under your bed, to software applications on your computer, or warehouse-sized data centres. Fundamentally, these all do the same thing: collect information and make it available.

Let us consider collecting information on APSES-domain transcription factors in various fungi, with the goal of being able to compare them. Let's specify this as follows:

- cross reference the source databases;

- study if they have the same features (e.g. domains);

- and compare the features.

The underlying information can easily be retrieved for a protein from its RefSeq or UniProt entry.

Text files

A first attempt to organize the data might be simply to write it down in a large text file:

name: Mbp1 refseq ID: NP_010227 uniprot ID: P39678 species: Saccharomyces cerevisiae taxonomy ID: 4392 sequence: MSNQIYSARYSGVDVYEFIHSTGSIMKRKKDDWVNATHILKAANFAKAKR TRILEKEVLKETHEKVQGGFGKYQGTWVPLNIAKQLAEKFSVYDQLKPLF DFTQTDGSASPPPAPKHHHASKVDRKKAIRSASTSAIMETKRNNKKAEEN QFQSSKILGNPTAAPRKRGRPVGSTRGSRRKLGVNLQRSQSDMGFPRPAI PNSSISTTQLPSIRSTMGPQSPTLGILEEERHDSRQQQPQQNNSAQFKEI DLEDGLSSDVEPSQQLQQVFNQNTGFVPQQQSSLIQTQQTESMATSVSSS PSLPTSPGDFADSNPFEERFPGGGTSPIISMIPRYPVTSRPQTSDINDKV NKYLSKLVDYFISNEMKSNKSLPQVLLHPPPHSAPYIDAPIDPELHTAFH WACSMGNLPIAEALYEAGTSIRSTNSQGQTPLMRSSLFHNSYTRRTFPRI FQLLHETVFDIDSQSQTVIHHIVKRKSTTPSAVYYLDVVLSKIKDFSPQY RIELLLNTQDKNGDTALHIASKNGDVVFFNTLVKMGALTTISNKEGLTAN EIMNQQYEQMMIQNGTNQHVNSSNTDLNIHVNTNNIETKNDVNSMVIMSP VSPSDYITYPSQIATNISRNIPNVVNSMKQMASIYNDLHEQHDNEIKSLQ KTLKSISKTKIQVSLKTLEVLKESSKDENGEAQTNDDFEILSRLQEQNTK KLRKRLIRYKRLIKQKLEYRQTVLLNKLIEDETQATTNNTVEKDNNTLER LELAQELTMLQLQRKNKLSSLVKKFEDNAKIHKYRRIIREGTEMNIEEVD SSLDVILQTLIANNNKNKGAEQIITISNANSHA length: 833 Kila-N domain: 21-93 Ankyrin domains: 369-455, 505-549 ...

... and save it all in one large text file and whenever you need to look something up, you just open the file, look for e.g. the name of the protein and read what's there. Or - for a more structured approach, you could put this into several files in a folder.[1] This is a perfectly valid approach and for some applications it might not be worth the effort to think more deeply about how to structure the data, and store it in a way that it is robust and scales easily to large datasets. Alas, small projects have a tendency to grow into large projects and if you work in this way, it's almost guaranteed that you will end up doing many things by hand that could easily be automated. Imagine asking questions like:

- How many proteins do I have?

- What's the sequence of the Kila-N domain?

- What percentage of my proteins have an Ankyrin domain?

- Or two ...?

Answering these questions "by hand" is possible, but tedious.

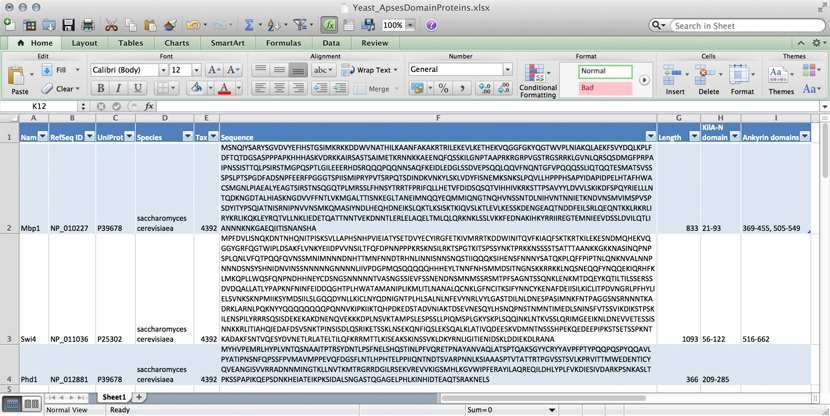

Spreadsheets

Many serious researchers keep their project data in spreadsheets. Often they use Excel, or an alternative like the free OpenOffice Calc, or Google Sheets, both of which are compatible with Excel and have some interesting advantages. Here, all your data is in one place, easy to edit. You can even do simple calculations - although you should never use Excel for statistics[2]. You could answer What percentage of my proteins have an Ankyrin domain? quite easily[3].

There are two major downsides to spreadsheets. For one, complex queries need programming. There is no way around this. You can program inside Excel with Visual Basic. But you might as well export your data so you can work on it with a "real" programming language. The other thing is that Excel does not scale very well. Once you have more than a hundred proteins in your spreadsheet, you can see how finding anything can become tedious.

However, just because it was built for business applications, and designed for use by office assistants, does not mean it is intrinsically unsuitable for our domain. It's important to be pragmatic, not dogmatic, when choosing tools: choose according to your real requirements. Sometimes "quick and dirty" is just fine, because quick.

R

R can keep complex data in data frames and lists. If we do data analysis with R, we have to load the data first. We can use any of the read.table() functions for structured data, read lines of raw text with readLines(), or slurp in entire files with scan(). But we could also keep the data in an R object in the first place that we can read from disk, analyze, modify, and write back. In this case, R becomes our database engine.

# Sample construction of an R database table as a dataframe

# Data for the Mbp1 protein

proteins <- data.frame(

name = "Mbp1",

refSeq = "NP_010227",

uniProt = "P39678",

species = "Saccharomyces cerevisiae",

taxId = "4392",

sequence = paste(

"MSNQIYSARYSGVDVYEFIHSTGSIMKRKKDDWVNATHILKAANFAKAKR",

"TRILEKEVLKETHEKVQGGFGKYQGTWVPLNIAKQLAEKFSVYDQLKPLF",

"DFTQTDGSASPPPAPKHHHASKVDRKKAIRSASTSAIMETKRNNKKAEEN",

"QFQSSKILGNPTAAPRKRGRPVGSTRGSRRKLGVNLQRSQSDMGFPRPAI",

"PNSSISTTQLPSIRSTMGPQSPTLGILEEERHDSRQQQPQQNNSAQFKEI",

"DLEDGLSSDVEPSQQLQQVFNQNTGFVPQQQSSLIQTQQTESMATSVSSS",

"PSLPTSPGDFADSNPFEERFPGGGTSPIISMIPRYPVTSRPQTSDINDKV",

"NKYLSKLVDYFISNEMKSNKSLPQVLLHPPPHSAPYIDAPIDPELHTAFH",

"WACSMGNLPIAEALYEAGTSIRSTNSQGQTPLMRSSLFHNSYTRRTFPRI",

"FQLLHETVFDIDSQSQTVIHHIVKRKSTTPSAVYYLDVVLSKIKDFSPQY",

"RIELLLNTQDKNGDTALHIASKNGDVVFFNTLVKMGALTTISNKEGLTAN",

"EIMNQQYEQMMIQNGTNQHVNSSNTDLNIHVNTNNIETKNDVNSMVIMSP",

"VSPSDYITYPSQIATNISRNIPNVVNSMKQMASIYNDLHEQHDNEIKSLQ",

"KTLKSISKTKIQVSLKTLEVLKESSKDENGEAQTNDDFEILSRLQEQNTK",

"KLRKRLIRYKRLIKQKLEYRQTVLLNKLIEDETQATTNNTVEKDNNTLER",

"LELAQELTMLQLQRKNKLSSLVKKFEDNAKIHKYRRIIREGTEMNIEEVD",

"SSLDVILQTLIANNNKNKGAEQIITISNANSHA",

sep=""),

seqLen = 833,

KilAN = "21-93",

Ankyrin = "369-455, 505-549",

stringsAsFactors = FALSE)

# add data for the Swi4 protein

proteins <- rbind(proteins,

data.frame(

name = "Swi4",

refSeq = "NP_011036",

uniProt = "P25302",

species = "Saccharomyces cerevisiae",

taxId = "4392",

sequence = paste(

"MPFDVLISNQKDNTNHQNITPISKSVLLAPHSNHPVIEIATYSETDVYEC",

"YIRGFETKIVMRRTKDDWINITQVFKIAQFSKTKRTKILEKESNDMQHEK",

"VQGGYGRFQGTWIPLDSAKFLVNKYEIIDPVVNSILTFQFDPNNPPPKRS",

"KNSILRKTSPGTKITSPSSYNKTPRKKNSSSSTSATTTAANKKGKKNASI",

"NQPNPSPLQNLVFQTPQQFQVNSSMNIMNNNDNHTTMNFNNDTRHNLINN",

"ISNNSNQSTIIQQQKSIHENSFNNNYSATQKPLQFFPIPTNLQNKNVALN",

"NPNNNDSNSYSHNIDNVINSSNNNNNGNNNNLIIVPDGPMQSQQQQQHHH",

"EYLTNNFNHSMMDSITNGNSKKRRKKLNQSNEQQFYNQQEKIQRHFKLMK",

"QPLLWQSFQNPNDHHNEYCDSNGSNNNNNTVASNGSSIEVFSSNENDNSM",

"NMSSRSMTPFSAGNTSSQNKLENKMTDQEYKQTILTILSSERSSDVDQAL",

"LATLYPAPKNFNINFEIDDQGHTPLHWATAMANIPLIKMLITLNANALQC",

"NKLGFNCITKSIFYNNCYKENAFDEIISILKICLITPDVNGRLPFHYLIE",

"LSVNKSKNPMIIKSYMDSIILSLGQQDYNLLKICLNYQDNIGNTPLHLSA",

"LNLNFEVYNRLVYLGASTDILNLDNESPASIMNKFNTPAGGSNSRNNNTK",

"ADRKLARNLPQKNYYQQQQQQQQPQNNVKIPKIIKTQHPDKEDSTADVNI",

"AKTDSEVNESQYLHSNQPNSTNMNTIMEDLSNINSFVTSSVIKDIKSTPS",

"KILENSPILYRRRSQSISDEKEKAKDNENQVEKKKDPLNSVKTAMPSLES",

"PSSLLPIQMSPLGKYSKPLSQQINKLNTKVSSLQRIMGEEIKNLDNEVVE",

"TESSISNNKKRLITIAHQIEDAFDSVSNKTPINSISDLQSRIKETSSKLN",

"SEKQNFIQSLEKSQALKLATIVQDEESKVDMNTNSSSHPEKQEDEEPIPK",

"STSETSSPKNTKADAKFSNTVQESYDVNETLRLATELTILQFKRRMTTLK",

"ISEAKSKINSSVKLDKYRNLIGITIENIDSKLDDIEKDLRANA",

sep=""),

seqLen = 1093,

KilAN = "56-122",

Ankyrin = "516-662",

stringsAsFactors = FALSE)

)

# how many proteins?

nrow(proteins)

#what are their names?

proteins[,"name"]

# how many do not have an Ankyrin domain?

sum(proteins[,"Ankyrin"] == "")

# save it to file

save(proteins, file="proteinData.Rda")

# delete it from memory

rm(proteins)

# check...

proteins # ... yes, it's gone

# read it back in:

load("proteinData.Rda")

# did this work?

sum(proteins[,"seqLen"]) # 1926 amino acids

# add another protein: Phd1

proteins <- rbind(proteins,

data.frame(

name = "Phd1",

refSeq = "NP_012881",

uniProt = "P39678",

species = "Saccharomyces cerevisiae",

taxId = "4392",

sequence = paste(

"MPFDVLISNQKDNTNHQNITPISKSVLLAPHSNHPVIEIATYSETDVYEC",

"MYHVPEMRLHYPLVNTQSNAAITPTRSYDNTLPSFNELSHQSTINLPFVQ",

"RETPNAYANVAQLATSPTQAKSGYYCRYYAVPFPTYPQQPQSPYQQAVLP",

"YATIPNSNFQPSSFPVMAVMPPEVQFDGSFLNTLHPHTELPPIIQNTNDT",

"SVARPNNLKSIAAASPTVTATTRTPGVSSTSVLKPRVITTMWEDENTICY",

"QVEANGISVVRRADNNMINGTKLLNVTKMTRGRRDGILRSEKVREVVKIG",

"SMHLKGVWIPFERAYILAQREQILDHLYPLFVKDIESIVDARKPSNKASL",

"TPKSSPAPIKQEPSDNKHEIATEIKPKSIDALSNGASTQGAGELPHLKIN",

"HIDTEAQTSRAKNELS",

sep=""),

seqLen = 366,

KilAN = "209-285",

Ankyrin = "", # No ankyrin domains annotated here

stringsAsFactors = FALSE)

)

# check:

proteins[,"name"] #"Mbp1" "Swi4" "Phd1"

sum(proteins[,"Ankyrin"] == "") # Now there is one...

sum(proteins[,"seqLen"]) # 2292 amino acids

# [END]

The third way to use R for data is to connect it to a "real" database:

- a relational database like mySQL, MariaDB, or PostgreSQL;

- an object/document database like {{WP|MongoDB};

- or even a graph-database like Neo4j.

R "drivers" are available for all of these. However all of these require installing extra software on your computer: the actual database, which runs as an independent application. If you need a rock-solid database with guaranteed integrity, industry standard performance, and scalability to even very large datasets and hordes of concurrent users, don't think of rolling your own solution. One of the above is the way to go.

MySQL and friends

MySQL is a free, open relational database that powers some of the largest corporations as well as some of the smallest laboratories. It is based on a client-server model. The database engine runs as a daemon in the background and waits for connection attempts. When a connection is established, the server process establishes a communication session with the client. The client sends requests, and the server responds. One can do this interactively, by running the client program /usr/local/mysql/bin/mysql (on Unix systems). Or, when you are using a program such as R, Python, Perl, etc. you use the appropriate method calls or functions—the driver—to establish the connection.

These types of databases use their own language to describe actions: SQL - which handles data definition, data manipulation, and data control.

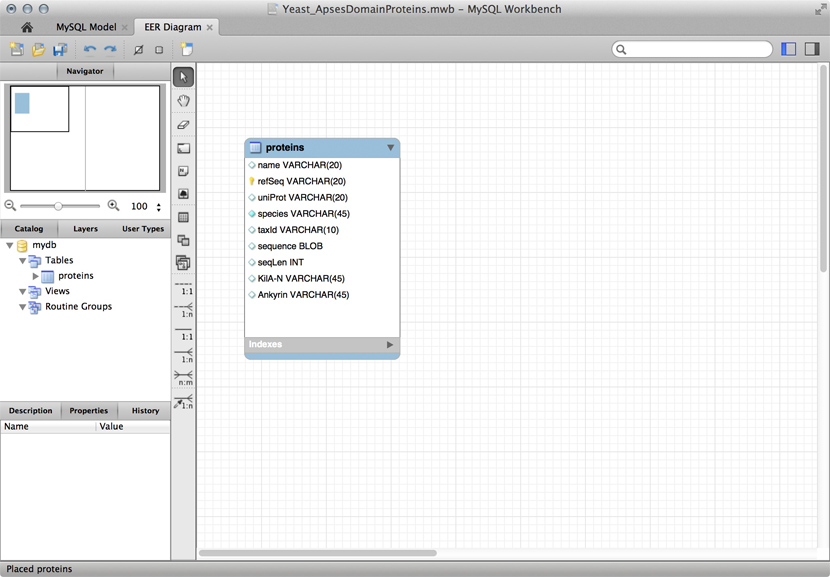

Just for illustration, the Figure above shows a table for our APSES domain protein data, built as a table in the MySQL workbench application and presented as an Entity Relationship Diagram (ERD). There is only one entity though - the protein "table". The application can generate the actual code that implements this model on a SQL compliant database:

CREATE TABLE IF NOT EXISTS `mydb`.`proteins` (

`name` VARCHAR(20) NULL,

`refSeq` VARCHAR(20) NOT NULL,

`uniProt` VARCHAR(20) NULL,

`species` VARCHAR(45) NOT NULL COMMENT ' ',

`taxId` VARCHAR(10) NULL,

`sequence` BLOB NULL,

`seqLen` INT NULL,

`KilA-N` VARCHAR(45) NULL,

`Ankyrin` VARCHAR(45) NULL,

PRIMARY KEY (`refSeq`))

ENGINE = InnoDB

This looks at least as complicated as putting the model into R in the first place. Why then would we do this, if we need to load it into R for analysis anyway. There are several important reasons.

- Scalability: these systems are built to work with very large datasets and optimized for performance. In theory R has very good performance with large data objects, but not so when the data becomes larger than what the computer can keep in memory all at once.

- Concurrency: when several users need to access the data potentially at the same time, you must use a "real" database system. Handling problems of concurrent access is what they are made for.

- ACID compliance. ACID describes four aspects that make a database robust, these are crucial for situations in which you have only partial control over your system or its input, and they would be quite laborious to implement for your hand built R data model:

- Atomicity: Atomicity requires that each transaction is handled "indivisibly": it either succeeds fully, with all requested elements, or not at all.

- Consistency: Consistency requires that any transaction will bring the database from one valid state to another. In particular any data-validation rules have to be enforced.

- Isolation: Isolation ensures that any concurrent execution of transactions results in the exact same database state as if transactions would have been executed serially, one after the other.

- Durability: The Durability requirement ensures that a committed transaction remains permanently committed, even in the event that the database crashes or later errors occur. You can think of this like an "autosave" function on every operation.

All the database systems I have mentioned above are ACID compliant[4].

Store Data

A System datamodel for the YFO Cell cycle

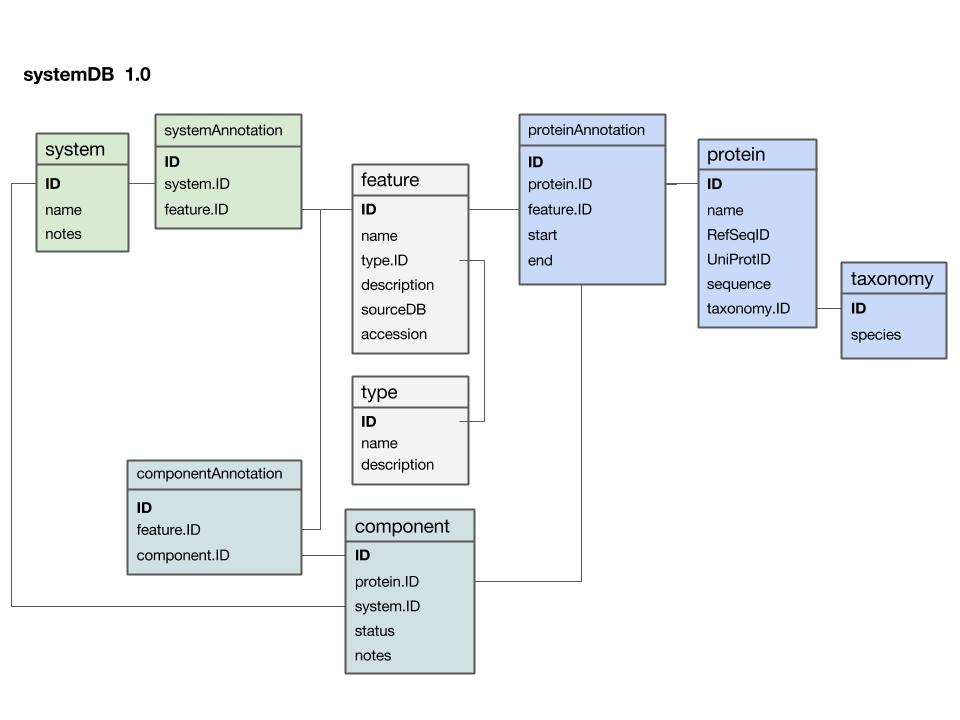

Here is a first version of a systems data model, based on what we discussed in class:

- The

featuretable is at the centre. This was not intentional, but emerged from iterating the model through a number of revisions. It emphasizes that the main purpose of this model is to integrate and annotate data from various sources. Feature in the way we understand it here is an abstraction of quite disparate categories of information items. This includes domain annotations, system functions, literature references, and cross-references to other databases. The type attribute will require some thought: this attribute really needs a "controlled vocabulary" to ensure that the same category is described consistently with the same string ("PubMed"? "Lit."? "reference"?). There are a number of ways to achieve this, the best way[5] is to store these types in their own "reference" tabletype- and link to that table via a foreign key. - The

proteintable is at the centre. Its primary key is a unique integer. We store the NCBI RefSeq ID and the Uniprot ID in the table. We would not call these "foreign keys", since the information they reference is not in our schema but at the NCBI resp. EBI. For example, we can't guarantee that they are unique keys. - The

taxonomytable holds information about species. We use the NCBI taxonomy ID as its primary key. The same key appears in the protein table as the foreign key that links the protein with its proper taxonomy information. This is an instance where the data model actually does not describe reality well. The problem is that particular proteins that we might retrieve from database searches will often be annotated to a specific strain of a species – and there is no easy way to reference strains to species. We'll see whether this turns out to be a problem in practice. But it may be that an additional table may be required that stores parent/child relationships of the taxonomic tree of life. - The

protein_featuretable links a protein with all the features that we annotate it with. start and end coordinates identify the region of the sequence we have annotated. - The

...Annotationtables link feature-information with annotated entities. - Should the

systemtable have its own taxonomy attribute? Or should the species in which the system is observed be inferred from the component protein'staxonomy.ID? What do you think? I decided not to add a taxonomy attribute to that table. How would you argue for or against this decision? - The

componenttable links proteins that collaborate together as a system. There is an implicit assumption in this model that only proteins are system components (and not e.g. RNA), and that components are atomic (i.e. we can't link to subsystems). How would you change the model to accommodate more realistic biological complexity?

Implementing the Data Model in R

To actually implement the data model in R we will create the tables as data frames, and we will collect them in a list. We don't have to keep the tables in a list - we could also keep them as independent objects, but with a table named "protein" we should be worried of inadvertently overwriting the table. A list is neater, more flexible (we might want to have several of these), it reflects our intent about the model better, and doesn't require very much more typing. For now, to keep things simple, we will implement only two tables: protein and taxonomy. We'll add the rest when we actually need them.

As you see we can edit any component of our data model directly by assigning new values to the element. But in general that's a really bad idea, since it is far too easy to bring the whole model into an inconsistent state. It's much better to write functions that get and set data elements, not only to keep the data consistent, but also because it is much easier, if the model ever changes, to simply edit the function code, rather than having to repeat every single data entry by hand.

What would an setData function have to look like? It should

- create a new entry if the requested row of a table does not exist yet;

- update data if the protein exists;

- perform consistency checks (i.e. check that the data has the correct type);

- perform sanity checks (i.e. check that data values fall into the expected range);

- perform completeness checks (i.e. handle incomplete data)

Let's start simple, and create a set- function to add new values to existing sequence data. Also, for clarity, we'll forgo many checks. The first thing we should do is to add the actual sequence.

We only entered a placeholder for the sequence field. Sequences come in many different flavours when we copy them from a Webpage: there can be whitespace, carriage returns, numbers, they can be upper-case, lower-case mixed-case ...

What we want in our sequence data field is one string that contains the entire sequence, and nothing but upper-case, amino-acid code letters.

We'll need to look at how we work with strings in R, how we identify and work with patterns in strings. This is a great time to introduce regular expressions.

Updating the database

Task:

- study the code in the

Updating the databasesection of the R script

Add "your" YFO Sequence

Task:

- Add the YFO Mbp1 protein data to the database

- Copy the code template to add a new protein and its taxonomy entry into the script file

myCode.Rthat you created at the very beginning. - Add your protein to the database by editing a copy of the code template in your script file. Ask on the mailing list if you don't know how (but be specific) or if you don't know how to find particular information items.

- Add the taxonomy entry to the taxonomy table, again simply modifying a copy of the code template in your own script file.

Task:

- Create your own version of the protein database by adding all the genes from YFO that you have discovered with the PSI-BLAST search for the APSES domain. Save it.

Further reading, links and resources

Notes

- ↑ Your operating system can help you keep the files organized. The "file system" is a database.

- ↑ For real: Excel is miserable and often wrong on statistics, and it makes horrible, ugly plots. See here and here why Excel problems are not merely cosmetic.

- ↑ At the bottom of the window there is a menu that says "sum = ..." by default. This provides simple calculations on the selected range. Set the choice to "count", select all Ankyrin domain entries, and the count shows you how many cells actually have a value.

- ↑ For a list of relational Database Management Systems, see here.

- ↑ Relational databases like MySQL, PostgresQL, and MariaDB offer the datatype "Enum" for this purpose but this is an inferior solution. Enum types need to be fixed at the time the schema is created, they can't store information about their semantics, i.e. how the keywords are defined and when they should be used, and they can't be used in more than one table, since they are metadata of one particular column.

Self-evaluation

If in doubt, ask! If anything about this learning unit is not clear to you, do not proceed blindly but ask for clarification. Post your question on the course mailing list: others are likely to have similar problems. Or send an email to your instructor.

About ...

Author:

- Boris Steipe <boris.steipe@utoronto.ca>

Created:

- 2017-08-05

Modified:

- 2017-08-05

Version:

- 0.1

Version history:

- 0.1 First stub

![]() This copyrighted material is licensed under a Creative Commons Attribution 4.0 International License. Follow the link to learn more.

This copyrighted material is licensed under a Creative Commons Attribution 4.0 International License. Follow the link to learn more.